Over the last few years, Artificial Intelligence has become one of the most recognized and widely used technologies, not only by end users, but also by companies around the world. Its ability to quickly solve complex problems and automate tasks has put this technology in the spotlight, to such an extent that consulting firms such as McKinsey, in their report “State of AI in 2025,” already point out that 88% of companies use Artificial Intelligence in at least one function of their business.

However, the rapid advance of this technology has also attracted the attention of cybercriminals, who use it to carry out increasingly sophisticated impersonation and identity theft attempts. These attacks have a significant economic impact on victims as well as on public and private entities. In fact, Deloitte’s projections indicate that fraud enabled by generative AI could cause losses of $40 billion in the United States alone by 2027, a figure that shows how much is at stake for the wider economy.

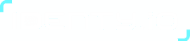

From fraud using masks and photos to deepfakes

How is AI used to carry out these frauds and identity thefts? Traditionally, criminals have relied on what are known as presentation attacks, in which they show the sensor a high-definition printed photograph, a silicone mold that replicates the victim’s fingerprint with great precision, or simply a mask to try to bypass security filters. The emergence of AI has added a new category: deepfakes, digitally generated biometric forgeries that emulate facial features or voices with great realism.

Deep neural networks are used to create these digital doubles with the aim of circumventing any protective measures. According to the “Human Detection of Deepfakes: An Investigation of Confidence and Accuracy” report, the human eye is only capable of detecting 24.5% of deepfakes, which means that roughly three out of four go unnoticed. Not even identity verification carried out via video call is safe, because AI can inject these deepfakes into real-time video streams.

Deepfake generation has become so sophisticated that the resulting images can blink or perform predefined movements. Active liveness verification systems, which many entities use in their onboarding process to confirm that the user is real by asking them to move their head in a certain direction or blink in a specific way, therefore offer only limited guarantees. The consequences can be far-reaching: fraudulent bank accounts opened, unauthorized transactions, withdrawals from retirement funds, or medical treatments obtained in another person’s name.

One high-profile example: in early 2024, a finance employee at a Hong Kong-based firm was deceived by a deepfake video call impersonating her head of finance and several colleagues, leading her to authorize a transfer of US$25 million to fraudsters.

AI as part of the solution to deepfakes and identity theft

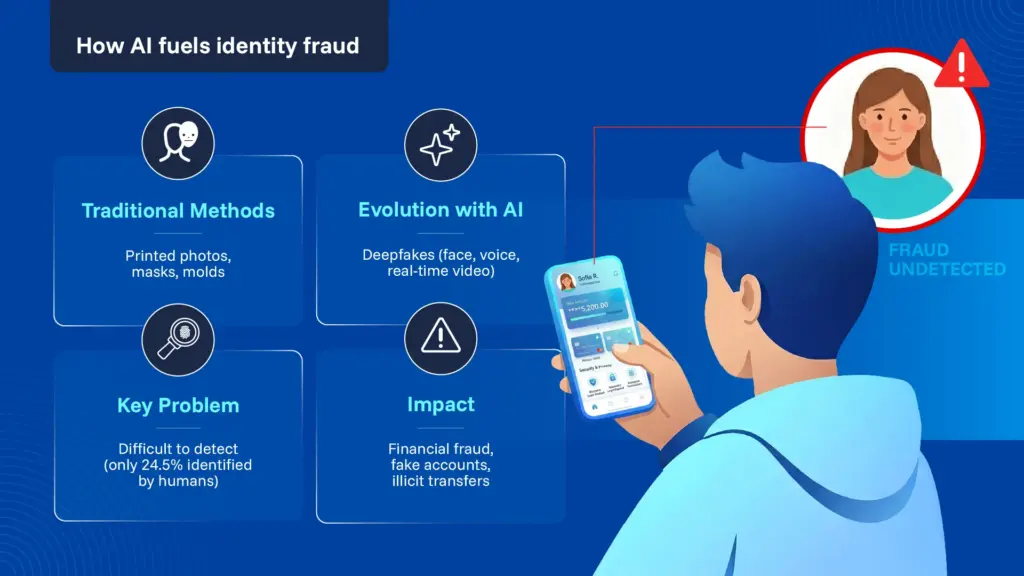

While the technology used by criminals has evolved rapidly, so too has the technology used by entities such as Identy.io to prevent impersonation and identity theft. One of the most important tools in this regard is passive liveness detection, a solution designed specifically to detect cases in which a third party presents a forged identity in an attempt to breach the security systems of a public or private entity.

How does passive liveness testing work? This technology does not require the user to interact directly with the system. In other words, it does not need the user to perform movements predefined by the system in order to differentiate between a real user and a digital double. The passive variant runs in the background, attempting to detect signs of life in a single frame or video sequence, using AI models trained to detect nuances in skin texture, lighting, facial micro-movements, or natural responses in the user.
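The idea of combining several passive cues into a single liveness decision can be illustrated with a minimal Python sketch. It is not Identy.io's implementation: the cue names, the weights, the threshold, and the assumption that upstream AI models already produce a score between 0 and 1 for each cue are all hypothetical and chosen only for illustration.

```python
from dataclasses import dataclass
from statistics import mean

# Hypothetical cue weights; a real system would learn these from data.
CUE_WEIGHTS = {"skin_texture": 0.4, "lighting": 0.3, "micro_movement": 0.3}


@dataclass(frozen=True)
class FrameCues:
    """Per-frame cue scores in [0, 1]; 1 means 'looks like a live person'.

    The scores are assumed to come from upstream models that are not shown here.
    """
    skin_texture: float
    lighting: float
    micro_movement: float


def frame_score(cues: FrameCues) -> float:
    """Weighted average of the cue scores for a single frame."""
    return sum(weight * getattr(cues, name) for name, weight in CUE_WEIGHTS.items())


def is_live(frames: list[FrameCues], threshold: float = 0.7) -> bool:
    """Average the frame scores and compare them with an illustrative threshold."""
    if not frames:
        raise ValueError("at least one frame is required")
    return mean(frame_score(f) for f in frames) >= threshold
```

Because the check works on one frame or on a sequence, the same function covers both the single-image and the video case described above, and the user is never asked to perform any gesture.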

So, is it necessary to do away with systems that use fingerprints as the sole security measure? No. The truth is that fingerprint biometrics is recognized as a reliable form of authentication, although with the evolution of technology, it is increasingly common to find multimodal or multi-factor forms of authentication, in which passive liveness testing using facial features becomes an additional layer of security against fraud. This is especially true considering that many modern fingerprint sensors already incorporate their own liveness tests, detecting sweat on the skin or pulse, for example.
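As a rough sketch of what such a layered check might look like, the Python example below accepts a user only when every layer passes. The three field names are hypothetical placeholders for the outputs of a fingerprint matcher, a sensor-side liveness test, and a passive facial liveness test; none of them refers to a real API.

```python
from dataclasses import asdict, dataclass


@dataclass(frozen=True)
class VerificationResult:
    """Hypothetical outcome of each security layer for one verification attempt."""
    fingerprint_match: bool   # the fingerprint matches the enrolled template
    fingerprint_live: bool    # the sensor's own liveness test passed
    face_live: bool           # the passive facial liveness test passed


def failed_checks(result: VerificationResult) -> list[str]:
    """Return the names of the layers that failed, in field order."""
    return [name for name, passed in asdict(result).items() if not passed]


def accept(result: VerificationResult) -> bool:
    """Accept the user only when no layer has failed."""
    return not failed_checks(result)
```

Requiring all layers to pass means that a convincing fake fingerprint is still rejected if the facial liveness check fails, which is the point of adding the extra layer.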

The technology developed by Identy.io, in addition to featuring the latest advances in passive liveness detection, has the advantage of processing all of the user’s personal and biometric information directly on their mobile device, which avoids the need for expensive biometric scanners and for users to travel to wherever those scanners are installed. With just a mobile phone equipped with a standard camera and flash, the system can capture the user’s fingerprints or face, carry out the necessary passive liveness checks, and store the user’s personal information on the same phone under strong encryption, protecting it against attacks and identity theft.

Identy.io’s passive liveness detection has been independently certified to ISO/IEC 30107-3 Level 2, the industry’s most rigorous Presentation Attack Detection standard, achieving a perfect score. This certification is particularly relevant for banking and fintech institutions that must demonstrate compliance with increasingly stringent KYC and AML regulations, and for telecom operators that face SIM swap fraud and must verify subscriber identity at onboarding.

As we can see, at a time when artificial intelligence has become an ever-evolving threat, the same technology also becomes a guarantee that the user’s personal data will be properly protected and safe from the use of deepfakes or digital doubles.