What is a biometric deepfake?

A biometric deepfake is an AI-generated fake that imitates a person’s biometric data (typically facial images or voice), often with startling realism. Using deep neural networks, attackers can create synthetic faces or videos that closely mimic a real user’s appearance and expressions. These forgeries pose a serious threat to facial recognition systems and identity verification processes. Human eyes and even standard algorithms struggle to tell high-quality deepfakes apart from genuine footage: in one study, people spotted deepfake videos only 24.5% of the time.

This means about 75% of the deepfakes in that study went unnoticed, underscoring how easily they could slip through unsophisticated defenses.

Impact on facial recognition and liveness detection: Traditional face recognition matches a selfie to an ID photo, assuming the selfie is real. Deepfakes undermine this by presenting an AI-manipulated live image or video of someone’s face. Unlike old-school spoofs (e.g. a printed photo or mask), deepfakes are dynamic: the fake face can blink, move, and even respond to prompts, fooling basic liveness detection. Liveness algorithms are designed to ensure a face is captured from a live person (not a still image or replay), often by checking for natural motions or 3D depth. However, earlier generations of liveness checks (like asking users to blink or turn their head) are easily duped by today’s deepfake tech.

For example, AI can now generate a video of a face that blinks and moves on command. If a fraudster feeds a deepfake video through a virtual camera app into a verification system, an unprepared biometric check might accept it as “live.” This puts digital onboarding and remote identity proofing at serious risk. Fintech providers, banks, and even government portals that rely on selfie-based verification have seen that deepfake attacks can slip through, impersonating victims despite multi-layer security.

The result can be fraudulent account openings, unauthorized transactions, or fake identities passing Know-Your-Customer (KYC) and anti-money laundering (AML) checks.


Deepfakes: from traditional spoofing to AI-driven attacks

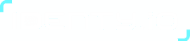

Biometric spoofing vs deepfake-based attacks: In the past, the main concern was presentation attacks using physical props or recorded media. Fraudsters might hold up a person’s photo to the camera, wear a lifelike mask, or replay a video of the victim. Liveness detection was introduced to defeat these tricks, for instance, by detecting flat images or requiring interactive movements. Deepfakes, however, up the ante.

They are not just stolen recordings but synthetic media generated by AI, often able to emulate subtle facial movements and behaviors. A well-crafted deepfake can appear more convincing than a static photo, and without advanced checks it may bypass systems that only look for simple signs of life. In essence, deepfakes are a sophisticated extension of biometric spoofing: instead of a crude fake (like a photo printout), the attacker presents a forged “live” persona. This has forced a shift in security strategies. Standard face recognition solutions by themselves cannot reliably distinguish a real face from an AI-generated impostor. Even many liveness systems that aren’t up-to-date fail to catch high-quality deepfakes or 3D masks. The industry is learning that traditional biometric authentication is vulnerable to AI-driven spoofs. To address this, security teams are turning to dual defenses: biometric liveness + deepfake detection in tandem.

Traditional spoofing versus biometric deepfake

Why liveness detection alone isn’t enough

Liveness detection remains vital: it checks that there is a live person in front of the camera, not just an artifact. Techniques range from active prompts (blink, smile, turn your head) to passive analysis (machine learning analyzing skin texture, reflections, and 3D depth from a short video). Liveness can catch many fake presentations. For example, certified 3D liveness systems can detect that a deepfake video is ultimately a 2D projection with odd lighting: a screen emits light differently than a real face reflects it, which a good system will flag.

However, liveness alone may not catch every AI-generated fake, especially as deepfakes improve. This is why experts now emphasize combining liveness detection with deepfake detection algorithms. The two approaches complement each other: liveness confirms there’s a real human attempt, while deepfake detection analyzes whether that “human” face itself might be computer-generated or manipulated.

The importance of combining approaches: Deepfake detection tools use AI to scrutinize visual and audio input for telltale signs of tampering, such as unnatural facial movements, inconsistent lip-sync, distortions in the image, or glitches in texture and lighting. These are clues that a face might be synthetically altered. By layering this analysis on top of liveness checks, a platform can verify both presence (live human vs. artifact) and authenticity (real person vs. deepfake).
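As a rough illustration of checking presence and authenticity together, the sketch below combines two detector scores into one decision. The score ranges, the threshold values, and the names `SelfieScores` and `verify_selfie` are assumptions made for this example; they do not describe any particular vendor's API.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SelfieScores:
    """Hypothetical detector outputs, each assumed to lie in [0.0, 1.0]."""
    liveness: float  # higher = more likely a live person is present
    deepfake: float  # higher = more likely the face is synthetic


def verify_selfie(scores: SelfieScores,
                  min_liveness: float = 0.90,
                  max_deepfake: float = 0.10) -> str:
    """Accept only when BOTH the presence and authenticity checks pass."""
    is_present = scores.liveness >= min_liveness    # live human vs. artifact
    is_authentic = scores.deepfake <= max_deepfake  # real person vs. deepfake
    if is_present and is_authentic:
        return "accept"
    if not is_present and not is_authentic:
        return "reject"
    # Exactly one check failed: route to manual review (a design choice).
    return "review"
```

Sending mixed results to review rather than rejecting outright is one possible policy; a stricter deployment could reject whenever either check fails.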

In high-security environments like banking, biometric security platforms with deepfake protection have become essential. Industry leaders predict that deepfake detection will go from a novelty to standard “security hygiene” in the next few years. In fact, regulators are already moving in this direction. The latest U.S. NIST Digital Identity guidelines explicitly include requirements to defend against deepfakes and other AI-generated attacks. Likewise, third-party labs now test vendors for “injection attack” resistance (meaning resilience to injected deepfake videos or synthetic images) as part of biometric system certification. This all underscores that a single defense layer is no longer sufficient. Only a multi-layered approach (document verification, face matching, liveness, and deepfake detection together) can reliably thwart AI-driven identity fraud.


Threats to Fintech, Banking and Government Onboarding

Digital onboarding in fintech and government services has surged, and so have attempts at deepfake identity fraud. Online banks and lenders, for instance, use selfie biometrics to verify new customers quickly. But criminals are exploiting this convenience. Real-world deepfake biometric testing has exposed alarming vulnerabilities. In one case, an Indonesian financial institution discovered over 1,100 deepfake attempts to bypass its remote onboarding in 2024. Fraudsters obtained stolen ID data and then used AI to generate new selfies matching the IDs, effectively impersonating the victims. These deepfakes fooled the bank’s facial recognition and basic liveness checks, allowing fake loan applications to go through. The scheme was only uncovered after advanced analysis, but it highlighted a potential $138+ million in losses had those accounts been fully exploited. This example illustrates that a deepfake bypassing KYC is not a theoretical worry: it’s happening now, at scale, in fintech markets.

Government and regulatory bodies are taking notice. In the United States, the Treasury’s Financial Crimes Enforcement Network (FinCEN) issued an alert in late 2024 about fraud schemes using deepfakes. FinCEN warned that malign actors may use synthetic IDs and even real-time deepfake videos to evade live verification checks during customer identification. In one noted scheme, applicants tried to open bank accounts with AI-generated passport photos and claimed technical issues during live video checks to cover for the deepfake’s glitches. Such incidents raise red flags for AML compliance: if banks cannot reliably vet real identities, criminals might create accounts to launder money or defraud systems. Beyond banking, government agencies that provide digital services (from tax portals to social benefits enrollment) face similar risks. A deepfake could allow someone to hijack an identity and access another person’s records or entitlements, undermining the integrity of e-government programs.

The YouTube deepfake tool example: Even mainstream tech platforms are grappling with this threat. Recently, YouTube launched a biometric verification tool for creators to detect and remove AI-manipulated videos that misuse their likeness. This move made news as a double-edged sword: it’s a response to deepfake abuse, but it raised concerns since YouTube’s policy technically allows the biometric data collected (creators’ face scans) to be used for AI training. For fintech and government leaders, the takeaway is clear: if a giant like YouTube feels compelled to roll out a deepfake detection mechanism, the threat is widespread. And if “YouTube biometric verification deepfake tool” headlines are appearing in the news, deepfake risks have entered public awareness. Unfortunately, the same accessible tools that let creators combat deepfakes can be repurposed by fraudsters. The internet is rife with tutorials on how to create convincing deepfakes; on YouTube itself, there are in-depth guides to generating face swaps using software like DeepFaceLab. This democratization of deepfake technology means even relatively low-skilled attackers can attempt deepfake-driven identity fraud. Fintechs and banks must assume that some percentage of their onboarding attempts will be AI-assisted fakes and prepare accordingly.

Best practices against deepfake

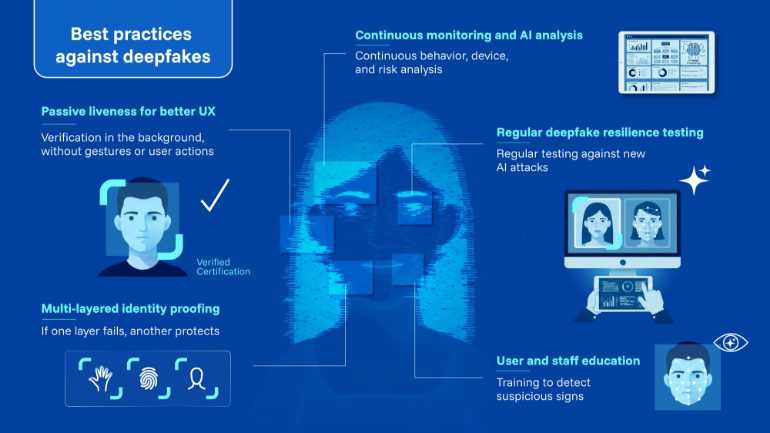

Best practices for biometric deepfake detection in fintech security

To maintain digital trust and meet compliance obligations, organizations in finance and government are adopting best practices to counter deepfake threats. Here are key strategies modern biometric security platforms employ for deepfake protection:

Multi-layered identity proofing

Robust platforms combine document authentication, biometric matching, and spoof detection in one flow. For example, an advanced onboarding system will scan the user’s ID for signs of tampering, match the face on the ID with a live selfie, and run both liveness and deepfake detection on that selfie. Multiple checks create overlapping shields: even if a deepfake slips past one layer, another can catch it. This layered approach aligns with regulators’ guidance to use risk-based, multi-factor verification (e.g. some jurisdictions now demand a mix of photo ID, biometric check with liveness, and even phone or IP metadata analysis for high-risk cases).
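The layered flow described above can be sketched as a list of independent checks that all run on the same application. This is a minimal illustration only: the field names, thresholds, and the `LAYERS`, `run_layers`, and `failed_layers` names are hypothetical, and each lambda stands in for a call to a real document, face-matching, liveness, or deepfake engine.

```python
from typing import Callable, NamedTuple

# A check takes the application record and returns True when the layer passes.
Check = Callable[[dict], bool]


class LayerResult(NamedTuple):
    layer: str
    passed: bool


# Hypothetical layers: each reads a precomputed signal from the application.
LAYERS: list[tuple[str, Check]] = [
    ("document_authentication", lambda app: not app["document_tampered"]),
    ("face_match", lambda app: app["face_match_score"] >= 0.80),
    ("liveness", lambda app: app["liveness_score"] >= 0.90),
    ("deepfake_detection", lambda app: app["deepfake_score"] <= 0.10),
]


def run_layers(app: dict,
               layers: list[tuple[str, Check]] = LAYERS) -> list[LayerResult]:
    """Run every layer (no short-circuit) so all failures are recorded."""
    return [LayerResult(name, check(app)) for name, check in layers]


def failed_layers(results: list[LayerResult]) -> list[str]:
    """Names of the layers that did not pass; an empty list means approve."""
    return [r.layer for r in results if not r.passed]
```

For example, an application whose selfie passes face matching and liveness but scores high on the deepfake detector would come back with `["deepfake_detection"]`, which is the "another layer catches it" case. Running every layer instead of stopping at the first failure keeps a full record for manual review.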

Passive liveness for better UX

User experience remains a priority, since too much friction can drive away legitimate customers. The latest solutions emphasize passive liveness detection, which works in the background without requiring users to perform awkward movements. AI analyzes the video feed for authenticity cues (like texture, moiré patterns, 3D depth) in a split second, typically as the user simply looks at the camera. This passive security approach, used by platforms like Identy, keeps the verification process seamless while still ensuring the person is real. When passive liveness is coupled with deepfake scanning, the security is “invisible” to honest users, who complete onboarding in seconds, while attempted spoofers are quietly flagged for review or rejection. Striking this balance between security and convenience is crucial for fintech adoption, where drop-off rates can hurt business. A strategic solution will thus “trust but verify” every onboarding attempt silently, only escalating when something looks suspicious.

Continuous monitoring and AI analysis

Biometric checks shouldn’t stop after account opening. Forward-looking security teams deploy ongoing fraud monitoring that can detect deepfake usage even after onboarding. This might include device intelligence (to spot emulator or virtual camera use), behavioral analytics, and transaction monitoring tied to biometric re-authentication for high-risk actions. For instance, if an account suddenly initiates a large transfer via a video-authorized transaction, the system can prompt a fresh liveness + face check and scan for deepfake signs before approval. Modern platforms often integrate these checks with fraud databases: if a certain face or device was associated with a known deepfake fraud attempt elsewhere, it can be automatically blacklisted or subjected to enhanced verification. Collaboration through shared anti-fraud intelligence is emerging as a best practice, so that when one bank catches a deepfake attacker, others are alerted to watch for the same signals.
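A simple way to picture these post-onboarding signals is a function that collects reasons for step-up verification. The sketch below is an assumption-laden illustration: the `Session` fields, the amount threshold, and the camera-label hints are invented for the example, and camera labels can be renamed, so a real deployment would need stronger device-integrity signals than a string match.

```python
from dataclasses import dataclass

# Illustrative, non-exhaustive substrings seen in virtual camera labels.
VIRTUAL_CAMERA_HINTS = ("virtual", "manycam")


@dataclass(frozen=True)
class Session:
    """Hypothetical signals gathered for one authenticated session."""
    camera_label: str       # label the capture device reports
    device_id: str          # identifier from device intelligence
    transfer_amount: float  # value of the requested transaction


def step_up_reasons(session: Session,
                    blocked_devices: set[str],
                    amount_threshold: float = 10_000.0) -> list[str]:
    """Return the reasons (if any) to demand a fresh liveness + face check."""
    reasons = []
    label = session.camera_label.lower()
    if any(hint in label for hint in VIRTUAL_CAMERA_HINTS):
        reasons.append("virtual_camera_suspected")
    if session.device_id in blocked_devices:
        reasons.append("device_on_shared_blocklist")
    if session.transfer_amount >= amount_threshold:
        reasons.append("high_value_transfer")
    return reasons
```

An empty list means the session proceeds without extra friction; any entry triggers re-verification, and the reasons themselves can be logged or shared as fraud intelligence.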

Regular deepfake resilience testing

Given how fast deepfake technology evolves, banks and vendors must regularly evaluate their systems against new attack techniques. This includes conducting deepfake biometric testing in controlled environments, essentially war-gaming the system with generated fake faces and voices to see if any slip by. Some institutions partner with security firms or participate in industry tests (for example, iBeta labs and independent assessors now test biometric systems for resistance to deepfake videos as part of certification). By proactively testing and tuning, organizations can patch vulnerabilities before criminals discover them. Additionally, staying updated with the latest standards (like ISO/IEC 30107 for biometric presentation attack detection, and its newer extensions addressing AI attacks) ensures the platform meets the highest security benchmarks.
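Results from such a test run are commonly summarized with two error rates used in presentation attack detection testing: APCER (the share of attack presentations wrongly accepted) and BPCER (the share of genuine presentations wrongly rejected). The sketch below loosely follows those definitions from ISO/IEC 30107-3; the function names, the "accept"/"reject" decision labels, and the attack-type names are assumptions for illustration.

```python
def error_rate(decisions: list[str], error_decision: str) -> float:
    """Fraction of decisions equal to the erroneous outcome."""
    if not decisions:
        raise ValueError("need at least one presentation")
    return sum(d == error_decision for d in decisions) / len(decisions)


def pad_report(attacks_by_type: dict[str, list[str]],
               bona_fide: list[str]) -> dict:
    """Summarize a test run.

    attacks_by_type maps a (hypothetical) attack type to the system's
    decisions for each attempt; bona_fide holds decisions for genuine users.
    """
    # APCER per attack type: attacks that were wrongly accepted.
    apcer = {t: error_rate(d, "accept") for t, d in attacks_by_type.items()}
    return {
        "apcer_by_type": apcer,
        "worst_apcer": max(apcer.values()),
        # BPCER: genuine users that were wrongly rejected.
        "bpcer": error_rate(bona_fide, "reject"),
    }
```

Tracking APCER per attack type, rather than as one pooled number, makes it visible when a new technique (say, a face swap) slips through even though older spoofs are still caught. BPCER is the counterweight: it shows how much friction the defenses add for legitimate users.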

User and staff education

Finally, a soft but important measure is raising awareness about deepfakes. Frontline compliance staff should be trained to spot signs of potential deepfake usage in manual reviews (e.g., if an applicant’s video feed has unnatural eye movements or if an interviewee’s video lags oddly out of sync with audio). Customers too can be educated on verifying communications. For instance, a bank could warn that it will never ask for certain actions over a video call, so that a customer questions a scenario where a “bank officer” on video looks real but is pressuring an unusual transaction (a scenario that has happened with CEO fraud deepfakes). Building a culture of “digital skepticism” around unexpected biometric requests can add an extra layer of defense against social engineering that employs deepfakes.

Safeguarding digital identity in the Deepfake Era

Deepfakes represent a fast-emerging threat to digital security, especially in high-trust sectors like finance and government. For CMOs, this threat translates to a potential erosion of customer trust: if users fall victim to fraud due to a deepfake bypass, the brand’s reputation for security can plummet. For CTOs and Security Heads, deepfakes are a call to action to bolster systems now, rather than later, because the cost of inaction is measured in fraud losses, compliance penalties, and damaged public confidence. The strategic solution is to invest in advanced biometric security platforms that offer deepfake protection by design. Platforms such as Identy exemplify this approach by combining biometric liveness and deepfake detection to verify identity with a high degree of assurance.

They do so while keeping the process user-friendly and scalable, an important factor for fintech onboarding or large government ID programs where millions of users may be verified remotely. In summary, combating deepfake attacks on biometric verification requires a combination of cutting-edge technology and prudent policy. On the technology side, multi-factor and AI-enhanced verification (face biometrics paired with robust liveness and deepfake screening) has become the gold standard for defending against AI-generated impostors. On the policy side, organizations should stay abreast of evolving guidelines (from bodies like NIST and ISO) and foster a security-aware culture.

The arms race between deepfake creators and biometric defenders is underway, and it will likely intensify. But with a proactive, layered approach, institutions can preserve digital trust and confidently authenticate their users even as generative AI transforms the threat landscape. The message for leadership is clear: deepfake fraud is no longer tomorrow’s problem. It’s here today, and investing in the right defenses now is paramount to staying one step ahead of cybercriminals and maintaining the integrity of biometric identification systems.


Bibliography

Biometric Update, “Google allows biometrics for YouTube likeness detection to be used in AI training” (Joel R. McConvey, Dec 2025).

FinCEN Alert, “Fraud Schemes Involving Deepfakes and Generative AI” (FIN-2024-Alert004, November 13, 2024). https://www.fincen.gov/system/files/shared/FinCEN-Alert-DeepFakes-Alert508FINAL.pdf