We are living at the dawn of the age of artificial intelligence (AI), a technology that, since its widespread adoption in 2021, has been transforming one field after another at remarkable speed. From entertainment to productivity, AI is everywhere, and among its most consequential applications are deepfakes, especially in the field of identity fraud. So what are they, and what impact can they have on society?

The Era of Synthetic Reality

We have already seen viral videos in which public figures (especially politicians) appear to say things they never actually said, with all the dangers this entails. This is multimedia content that has been manipulated, or generated from scratch, using artificial intelligence, and it looks increasingly authentic. That is a deepfake, a term that combines “deep learning” and “fake.”

A standard definition of deepfake refers to audiovisual content (whether image, audio, or video) generated or modified using advanced artificial intelligence techniques, specifically deep learning, with the aim of realistically imitating the appearance, voice, or gestures of a real person.

The technology behind many of these videos is neural networks, notably GANs (Generative Adversarial Networks), in which two models compete against each other to produce content that looks realistic to the human eye. On one side is the Generator, which creates the synthetic content. On the other is the Discriminator, whose mission is to evaluate content and detect whether it is real or generated. Each model improves in response to the other, so the Generator’s output becomes progressively harder to tell apart from genuine material.
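To make the Generator/Discriminator interplay concrete, here is a deliberately tiny sketch of an adversarial training loop. It is not how production deepfake models are built: the "content" is a single number rather than an image, both models are one-line linear functions, and all names (`Generator`, `Discriminator`, `train`) and hyperparameters are assumptions chosen for illustration only.

```python
import math
import random


def sigmoid(s: float) -> float:
    """Numerically stable logistic function."""
    if s >= 0:
        return 1.0 / (1.0 + math.exp(-s))
    e = math.exp(s)
    return e / (1.0 + e)


class Discriminator:
    """Scores a sample: near 1 means 'looks real', near 0 means 'looks fake'."""

    def __init__(self) -> None:
        self.w, self.c = 0.0, 0.0

    def score(self, x: float) -> float:
        return sigmoid(self.w * x + self.c)

    def step(self, real: float, fake: float, lr: float) -> None:
        # One gradient-descent step on -[log D(real) + log(1 - D(fake))].
        d_real, d_fake = self.score(real), self.score(fake)
        grad_w = -(1.0 - d_real) * real + d_fake * fake
        grad_c = -(1.0 - d_real) + d_fake
        self.w -= lr * grad_w
        self.c -= lr * grad_c


class Generator:
    """Maps random noise z to a synthetic sample a*z + b."""

    def __init__(self) -> None:
        self.a, self.b = 1.0, 0.0

    def sample(self, z: float) -> float:
        return self.a * z + self.b

    def step(self, z: float, disc: Discriminator, lr: float) -> None:
        # One gradient-descent step on -log D(G(z)):
        # move the fake sample toward what the Discriminator calls real.
        d_fake = disc.score(self.sample(z))
        grad_x = -(1.0 - d_fake) * disc.w
        self.a -= lr * grad_x * z
        self.b -= lr * grad_x


def train(steps: int = 2000, lr: float = 0.01, seed: int = 0):
    """Alternate Discriminator and Generator updates (toy setup)."""
    rng = random.Random(seed)
    gen, disc = Generator(), Discriminator()
    for _ in range(steps):
        real = rng.gauss(4.0, 0.5)          # stand-in for genuine data
        z = rng.gauss(0.0, 1.0)             # noise fed to the Generator
        disc.step(real, gen.sample(z), lr)  # D learns to separate real from fake
        gen.step(z, disc, lr)               # G learns to fool the updated D
    return gen, disc
```

Calling `gen, disc = train()` runs the loop; `disc.score(x)` then gives the Discriminator's opinion of any value `x`. With models this simple the two sides can oscillate rather than settle, which is a known difficulty of adversarial training in general.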

Deepfakes, a threat to identity

AI tools are proving to be a double-edged sword: they have many high-value applications, but they also pose a danger depending on who is using them. Deepfakes do have positive applications, mainly in the film and visual effects industry and in education and museums (to bring historical figures back to life), but their fraudulent uses are far more prominent.

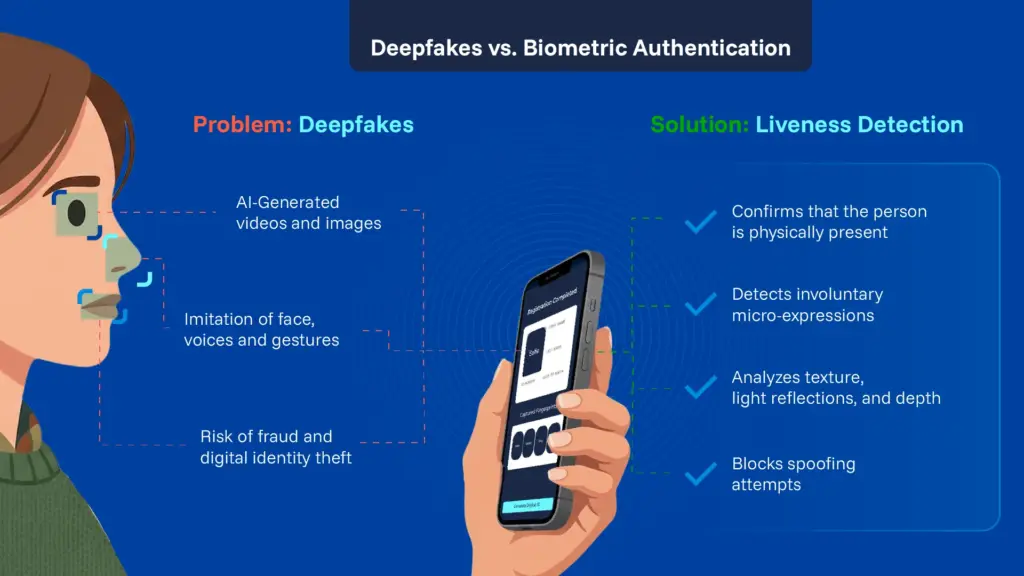

Deepfakes can be used for a variety of criminal activities, such as disinformation and manipulation, but among the most frequent and worrying are identity spoofing and fraud.

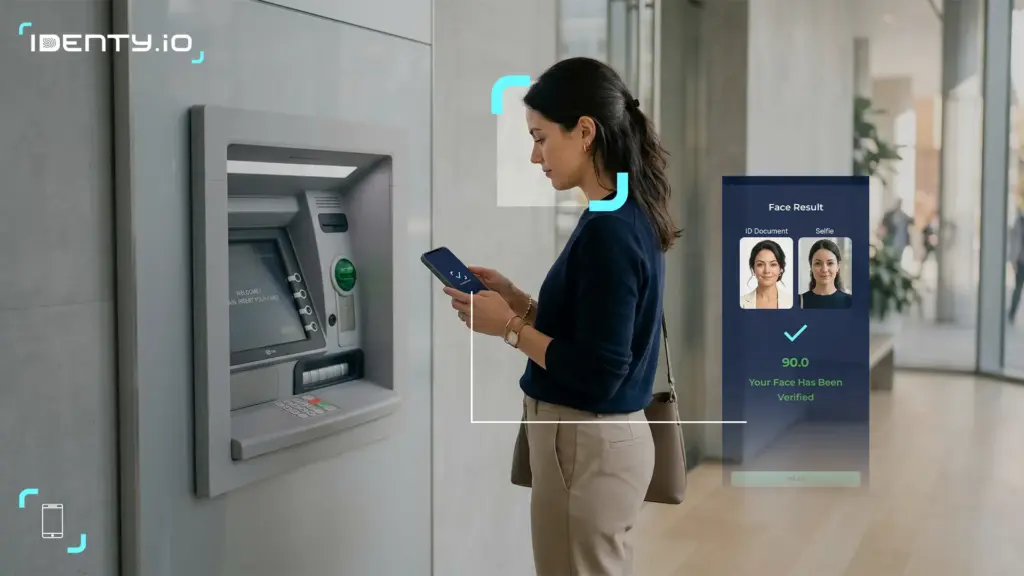

As weaker traditional forms of verification, such as passwords and codes, are left behind, biometrics has emerged as a fundamental tool for adding layers of security and is becoming the standard for identity verification. However, cybercriminals have taken advantage of advanced technologies such as deepfakes to deceive and bypass standard biometric verification measures.

The key tool against deepfakes: liveness detection

In this context, one tool stands out as the most effective defense against deepfakes: liveness detection. This technology checks, with a high degree of confidence, that the person in front of the camera is real and physically present at the time of identification. If the system detects any anomaly that could indicate otherwise, it automatically rejects the verification.

Liveness detection has two modes. Active liveness detection requires the user to interact with the system, for example by blinking, smiling, turning their head, or following an instruction. This adds a challenge-response layer to prove that the face is alive.
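The challenge-response idea behind active liveness can be sketched as follows. This is a minimal illustration under stated assumptions, not any vendor's implementation: the class `ActiveLivenessCheck`, the action names, and the 10-second time limit are hypothetical, and `observed_action` stands in for the output of a separate vision model that recognizes what the user actually did on camera.

```python
import secrets
import time
from dataclasses import dataclass
from typing import Dict, Optional

# Hypothetical set of actions the system may ask for.
ACTIONS = ("blink", "smile", "turn_head_left", "turn_head_right")


@dataclass(frozen=True)
class Challenge:
    nonce: str         # one-time identifier, so a recorded response cannot be replayed
    action: str        # what the user is asked to do
    expires_at: float  # deadline, in seconds since the epoch


class ActiveLivenessCheck:
    def __init__(self, ttl_seconds: float = 10.0) -> None:
        self.ttl = ttl_seconds
        self._pending: Dict[str, Challenge] = {}

    def issue(self, now: Optional[float] = None) -> Challenge:
        """Pick an unpredictable action and remember it until it expires."""
        now = time.time() if now is None else now
        challenge = Challenge(
            nonce=secrets.token_hex(16),
            action=secrets.choice(ACTIONS),
            expires_at=now + self.ttl,
        )
        self._pending[challenge.nonce] = challenge
        return challenge

    def verify(self, nonce: str, observed_action: str,
               now: Optional[float] = None) -> bool:
        """Accept only a timely, first-time response that matches the challenge."""
        now = time.time() if now is None else now
        challenge = self._pending.pop(nonce, None)  # each challenge is single use
        if challenge is None or now > challenge.expires_at:
            return False
        return observed_action == challenge.action
```

Because the requested action is chosen at random for each session and expires quickly, a pre-recorded or pre-generated video is unlikely to contain the right gesture at the right moment.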

Passive liveness detection, on the other hand, does not require the user to perform any action; it simply analyzes the person’s image for signs of life.

Identity.io’s passive liveness technology uses AI models trained to detect patterns that identify a video as real. These patterns include structure and depth, since human faces naturally have depth that varies as the person or camera moves from angle to angle. Another aspect analyzed is texture and light reflection, because skin reflects light in a complex, organic way that is difficult to replicate. Finally, the algorithms can detect micro-expressions and involuntary movements that real faces display but artificial ones do not.
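One way to picture how several passive signals could feed a single accept-or-reject decision is the sketch below. It is not Identity.io's method, which the article does not describe at this level. The three scores are assumed to come from separate models, each returning a value between 0 and 1, and the thresholds `min_each` and `min_mean` are illustrative assumptions.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PassiveSignals:
    depth: float         # 0..1, how consistent the 3D structure is across frames
    texture: float       # 0..1, how plausible skin texture and light reflection are
    micro_motion: float  # 0..1, evidence of involuntary micro-movements


def is_live(signals: PassiveSignals,
            min_each: float = 0.5,
            min_mean: float = 0.7) -> bool:
    """Reject if any single signal looks anomalous or the average is too low."""
    scores = (signals.depth, signals.texture, signals.micro_motion)
    if any(not 0.0 <= s <= 1.0 for s in scores):
        raise ValueError("each score must be between 0 and 1")
    if min(scores) < min_each:
        return False  # one clear anomaly is enough to reject
    return sum(scores) / len(scores) >= min_mean
```

For example, `is_live(PassiveSignals(depth=0.9, texture=0.8, micro_motion=0.85))` accepts, while a sample with strong depth and texture scores but almost no micro-movement is rejected by the per-signal floor.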

Companies and institutions must bear in mind that the risk of deepfakes is real and present, and that it will continue to grow as the algorithms used to create them become more sophisticated and the technology more accessible. Implementing biometric measures with liveness detection will be vital to keeping identification secure.